- Faculty: Armando Fox, Marti Hearst

- Serena Caraco (PhD, advised by Armando Fox)

- Nelson Lojo (MS, advised by Armando Fox)

- Prof. Mike Verdicchio, The Citadel, The Military College of South Carolina

- Nate Weinman (PhD 2022, advised by Armando Fox and Marti Hearst, now at Figma)

- Eliane Wiese (postdoc 2018, now at University of Utah)

- Undergraduate researchers: Brian Hsu, Alexia Camacho

Introducing Faded Parsons Problems

Our earlier work on AutoStyle revealed that the students who benefited least from automatic coding-style hints were those who began with a poor high-level structure or strategy for the coding problem. This led us to ask: how can we teach, scaffold, and verify the process by which a student produces a high-level strategy? Often, the student was unaware of a particular programming idiom or pattern that could be used to solve the problem more elegantly.

Programming patterns (sometimes called plans, schemata, or templates) are high-level, partial implementations of reusable programming concepts or strategies that achieve some goal. For example, an introductory-level programming pattern is “premature return from a loop”: searching a collection until some element satisfies a predicate, then breaking out of the loop to immediately return that element, with a catch-all return after the loop in case no match is found. According to cognitive theory, when people view example problems with identifiable similarities, they eventually construct and store a complex pattern, which they can then recall and reuse when solving a problem that fits the pattern, rather than constructing the problem’s solution from scratch. Learning to recognize and apply patterns is therefore critical to becoming a proficient software engineer.

But in traditional code-writing exercises, a student who doesn’t already know the pattern (or doesn’t recognize the opportunity to use it) must simultaneously learn the concept behind the pattern (e.g. “premature return from a loop”) and the specific syntax required to adapt the pattern to a variety of situations. To address this challenge, we developed Faded Parsons Problems [1].
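As a concrete illustration of the “premature return from a loop” pattern described above, here is a minimal Python sketch. The function name `find_first` and its parameters are our own choices for illustration and are not taken from any of the exercises used in the studies.

```python
from typing import Callable, Iterable, Optional, TypeVar

T = TypeVar("T")


def find_first(items: Iterable[T], predicate: Callable[[T], bool]) -> Optional[T]:
    """Return the first item that satisfies `predicate`, or None if none does."""
    for item in items:
        if predicate(item):
            # Premature return: leave the loop as soon as a match is found.
            return item
    # Catch-all return: the loop finished without finding a match.
    return None


print(find_first([3, 8, 5, 12], lambda n: n % 2 == 0))  # prints 8
print(find_first([3, 5, 7], lambda n: n % 2 == 0))      # prints None
```

A student who already knows the pattern only has to adapt the predicate and the collection; a student who does not must discover both the two-return structure and the syntax at the same time.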
Original Parsons Problems require students to unscramble lines of code to form a working solution; Faded Parsons Problems also allow the instructor to replace some tokens in the given code with blanks, gradually shifting the focus from reconstructing an expert’s solution to code-writing. With few or no blanks, the student can focus on learning the pattern without worrying about constructing the right syntax; as blanks are introduced, the student must fill in more and more of the code, learning the syntax as they go. In contrast to regular Parsons Problems, whose learning gains do not necessarily transfer to code-writing tasks [1], Faded Parsons Problems were specifically found to improve code-writing skills as well as or better than code-writing exercises [2]. They are also valuable in exposing students to new programming patterns by reconstructing expert solutions that use them. This short video shows the student experience of solving a Faded Parsons Problem.

Teaching Test-Writing Using Faded Parsons Problems

Testing is a critical skill in real-world software engineering, yet is woefully underemphasized in many CS programs. Testing is much more complex than just calling a leaf function and checking the return values: correctly stimulating part of the system under test may require setting up preconditions, controlling the return values of helper functions it calls (i.e. stubbing), providing “test double” objects to fill roles that are necessary for the subject code to run but are not the focus of the test itself (i.e. mock objects), and so on. Real-world testing libraries often include sophisticated functions to help with such tasks. Learning to write tests therefore presents two challenges:

- Conceptual: how do I set up and control the environment in which the subject code runs, in order to isolate the specific behavior being tested?

- Syntactic: how do I make use of the facilities of testing libraries to accomplish those goals?
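To make the two challenges concrete, here is a small sketch of a test that uses a stub and a mock object. It uses Python's standard `unittest.mock` module purely for illustration, not necessarily the testing library used in our exercises. The `CheckoutService` class and its `gateway` and `mailer` collaborators are hypothetical and exist only for this example.

```python
from unittest.mock import Mock


class CheckoutService:
    """Hypothetical subject code: charge a card, then email a receipt."""

    def __init__(self, gateway, mailer):
        self.gateway = gateway
        self.mailer = mailer

    def checkout(self, email, amount_cents):
        if not self.gateway.charge(amount_cents):
            return False
        self.mailer.send(email, f"Charged {amount_cents} cents")
        return True


def test_receipt_sent_on_successful_charge():
    # Conceptual step: isolate checkout() from the real payment gateway.
    gateway = Mock()
    gateway.charge.return_value = True  # stub: control the helper's return value
    mailer = Mock()                     # mock object: stands in for the mailer
    service = CheckoutService(gateway, mailer)

    assert service.checkout("a@example.com", 500) is True
    # Syntactic step: use the library's facilities to verify the interaction.
    gateway.charge.assert_called_once_with(500)
    mailer.send.assert_called_once_with("a@example.com", "Charged 500 cents")


def test_no_receipt_when_charge_fails():
    gateway = Mock()
    gateway.charge.return_value = False  # stub a declined charge
    mailer = Mock()
    service = CheckoutService(gateway, mailer)

    assert service.checkout("a@example.com", 500) is False
    mailer.send.assert_not_called()


test_receipt_sent_on_successful_charge()
test_no_receipt_when_charge_fails()
```

Even in this tiny case, the test code is mostly about arranging the environment (the conceptual challenge) and knowing calls such as `return_value` and `assert_called_once_with` (the syntactic challenge), rather than about the behavior under test itself.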

Scaffolding Subgoal Decomposition for Complex Software Tasks

Another challenge area for CS100-level students is working with large codebases, especially those in which control flow and data ownership are distributed across multiple subsystems. For example, in a Model-View-Controller framework such as Rails, adding a new feature to an app typically requires adding code in one or more models, one or more views, and one or more controllers, plus possibly helper code in other places. Novices don’t always know what responsibilities belong where, and if they start writing code without this understanding, the result may violate architectural conventions in ways that make their code harder to understand, test, and maintain. Another example from the SaaS world is third-party authentication (“Login with Google”): there is a complex workflow in which control shifts among various places in the app that the user is signing into and the third-party authentication provider. Yet another example is the flow of control and data when a Web page makes an AJAX call to a server and must register a callback to receive data from the server. In all these cases, the software developer must know:

- What are the modules or subsystems involved in the operation, and how does control shift across them during the operation?

- What data is expected/consumed by each subsystem, and by which subsystem was it created?
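One way to think about these two questions is to write the operation down as an ordered list of steps, each tagged with the subsystem that runs it and the data it consumes and produces. The Python sketch below does this for a simplified third-party login flow. The `Step` class, the `unmet_inputs` function, and the specific step and data names are all hypothetical, invented for illustration; they are not the data model of our tool.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    description: str
    subsystem: str                      # where control is during this step
    consumes: set = field(default_factory=set)
    produces: set = field(default_factory=set)


def unmet_inputs(steps):
    """Return (description, missing data) pairs for every step that
    consumes data no earlier step has produced."""
    available = set()
    problems = []
    for step in steps:
        missing = step.consumes - available
        if missing:
            problems.append((step.description, sorted(missing)))
        available |= step.produces
    return problems


# Hypothetical, simplified "Login with Google" flow.
login_flow = [
    Step("User clicks the login link", "view",
         produces={"login request"}),
    Step("App redirects to the provider", "controller",
         consumes={"login request"}, produces={"redirect"}),
    Step("Provider authenticates and calls back", "third-party provider",
         consumes={"redirect"}, produces={"auth info"}),
    Step("App finds or creates the user", "model",
         consumes={"auth info"}, produces={"user record"}),
    Step("App stores the user in the session", "controller",
         consumes={"user record"}, produces={"session"}),
]

print(unmet_inputs(login_flow))  # prints []

# Dropping the redirect step leaves the provider without its input.
broken_flow = login_flow[:1] + login_flow[2:]
print(unmet_inputs(broken_flow))
# prints [('Provider authenticates and calls back', ['redirect'])]
```

The `subsystem` field answers the first question (where control is at each step), and the `consumes`/`produces` sets answer the second (which subsystem created each piece of data).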

Example: Step 1

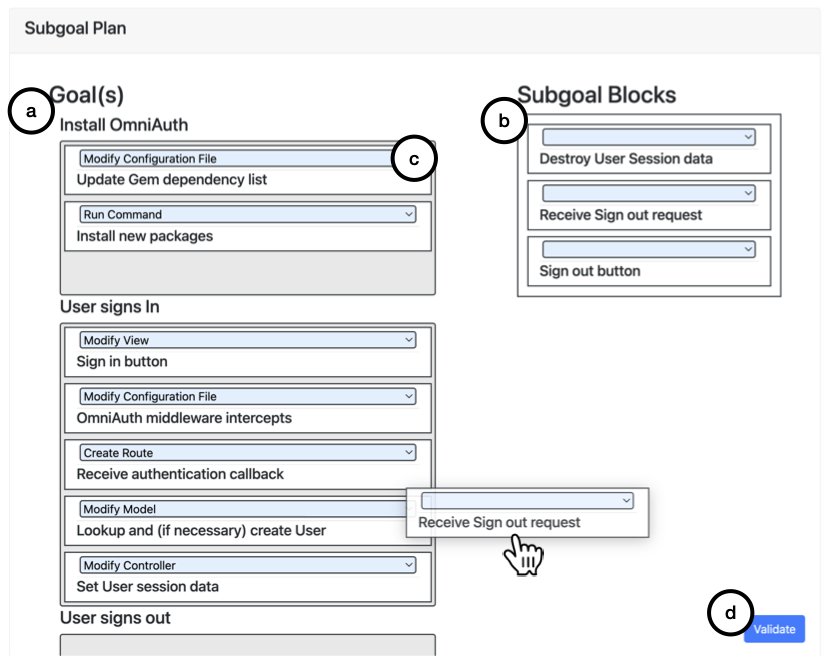

Here is a typical two-step exercise based on the Rails SaaS framework, which implements the Model–View–Controller architecture. The overall task here is to add third-party authentication via the OmniAuth library to an existing app. In the first step of the exercise, students drag each subgoal block (b) to the correct higher-level goal (a) and arrange the goals and subgoals in the correct order. Students use the dropdown menus (c) to indicate where in the app the corresponding code will eventually go (model, view, controller, configuration files, or just running a command to make something happen).

Example: Step 2

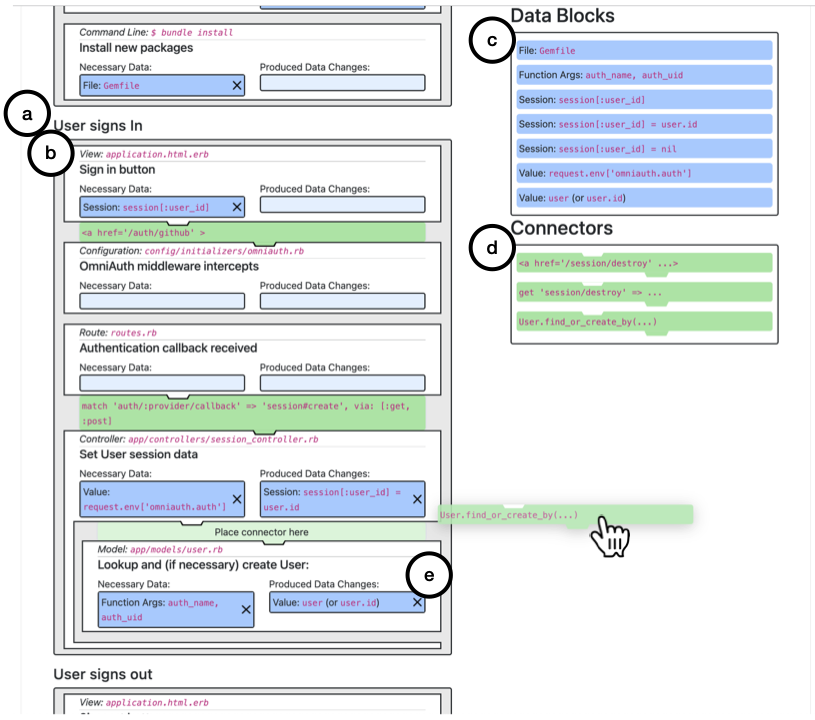

Then, given the correctly-ordered goals and subgoals (a), new containers appear inside each subgoal (b) to indicate what data is consumed/produced as a result of that subgoal step; students must drag correct Data Blocks (d) to fill in these containers, using the ‘X’ button (e) to delete incorrectly-placed blocks. This tool exists in prototype form, but our plan is to incorporate it into the PrairieLearn framework, as we have done with the Faded Parsons Problem element.


Support acknowledgment: This work is supported by a National Science Foundation Graduate Fellowship grant #DGE 1752814, and by grants #OPR19186 and #00005919 from the California Education Learning Lab, an initiative of the California Governor’s Office of Planning and Research.

[1] Weinman, Nathaniel, Armando Fox, and Marti Hearst. 2020. Exploring challenging variations of Parsons problems. In Proceedings of the 51st ACM Technical Symposium on Computer Science Education, New York, NY, USA.


@inproceedings{Weinman2020,
  doi = {10.1145/3328778.3372639},
  url = {https://doi.org/10.1145/3328778.3372639},
  year = {2020},
  month = feb,
  publisher = {{ACM}},
  address = {New York, NY, USA},
  author = {Nathaniel Weinman and Armando Fox and Marti Hearst},
  title = {Exploring Challenging Variations of Parsons Problems},
  pages = {1349},
  numpages = {1},
  booktitle = {Proceedings of the 51st {ACM} Technical Symposium on Computer Science Education}
}

[2] Weinman, Nathaniel, Armando Fox, and Marti A. Hearst. 2021. Improving instruction of programming patterns with faded Parsons problems. In 2021 ACM CHI Virtual Conference on Human Factors in Computing Systems (CHI 2021), Yokohama, Japan (online virtual conference).


@InProceedings{parsons-chi2021,
  author = {Nathaniel Weinman and Armando Fox and Marti A. Hearst},
  title = {Improving Instruction of Programming Patterns With Faded {P}arsons Problems},
  booktitle = {2021 {ACM} {CHI} Virtual Conference on Human Factors in Computing Systems ({CHI} 2021)},
  year = 2021,
  month = {May},
  address = {Yokohama, Japan (online virtual conference)}
}

[4] Lojo, Nelson and Armando Fox. 2022. Teaching test-writing as a variably-scaffolded programming pattern. In 27th Annual Conference on Innovation and Technology in Computer Science Education (ITiCSE 2022), Dublin, Ireland.


@InProceedings{rspec-faded-parsons,
  author = {Nelson Lojo and Armando Fox},
  title = {Teaching Test-Writing As a Variably-Scaffolded Programming Pattern},
  booktitle = {27th Annual Conference on Innovation and Technology in Computer Science Education ({ITiCSE} 2022)},
  year = 2022,
  month = 6,
  address = {Dublin, Ireland},
  note = {30\% accept rate}
}